How to handle millions of new Tor clients

[tl;dr: if you want your Tor to be more stable, upgrade to a Tor Browser Bundle with Tor 0.2.4.x in it, and then wait for enough relays to upgrade to today's 0.2.4.17-rc release.]

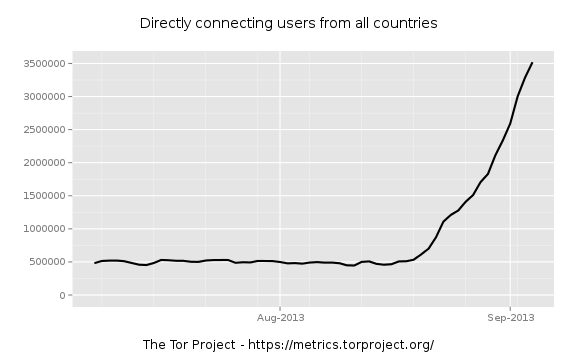

Starting around August 20, we started to see a sudden spike in the number of Tor clients. By now it's unmistakable: there are millions of new Tor clients and the numbers continue to rise:

Where do these new users come from? My current best answer is a botnet.

Some people have speculated that the growth in users comes from activists in Syria, Russia, the United States, or some other country that has good reason to have activists and journalists adopting Tor en masse lately. Others have speculated that it's due to massive adoption of the Pirate Browser (a Tor Browser Bundle fork that discards most of Tor's security and privacy features), but we've talked to the Pirate Browser people and the downloads they've seen can't account for this growth. The fact is, with a growth curve like this one, there's basically no way that there's a new human behind each of these new Tor clients. These Tor clients got bundled into some new software which got installed onto millions of computers pretty much overnight. Since no large software or operating system vendors have come forward to tell us they just bundled Tor with all their users, that leaves me with one conclusion: somebody out there infected millions of computers and as part of their plan they installed Tor clients on them.

It doesn't look like the new clients are using the Tor network to send traffic to external destinations (like websites). Early indications are that they're accessing hidden services — fast relays see "Received an ESTABLISH_RENDEZVOUS request" many times a second in their info-level logs, but fast exit relays don't report a significant growth in exit traffic. One plausible explanation (assuming it is indeed a botnet) is that it's running its Command and Control (C&C) point as a hidden service.

My first observation is "holy cow, the network is still working." I guess all that work we've been doing on scalability was a good idea. The second observation is that these new clients actually aren't adding that much traffic to the network. Most of the pain we're seeing is from all the new circuits they're making — Tor clients build circuits preemptively, and millions of Tor clients means millions of circuits. Each circuit requires the relays to do expensive public key operations, and many of our relays are now maxed out on CPU load.

There's a potentially dangerous cycle here: when a client tries to build a circuit and it fails, it tries again. So if relays are so overwhelmed that each of them drops half the requests it gets, then more than half the attempted circuits will fail (since every relay on the circuit has to succeed; for a typical three-hop circuit, seven in eight fail), generating even more circuit requests.
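To put numbers on that cycle, here is a toy model in Python. It is not Tor code: it assumes three-hop circuits, independent drops at each relay, and that every failed circuit is simply retried. The function names are invented for illustration.

```python
from __future__ import annotations

import math


def circuit_success_probability(per_relay_success: float, hops: int = 3) -> float:
    """Chance that every relay on the path accepts its create request."""
    return per_relay_success ** hops


def expected_attempts(per_relay_success: float, hops: int = 3) -> float:
    """Mean number of tries until one circuit succeeds (geometric distribution)."""
    p = circuit_success_probability(per_relay_success, hops)
    return math.inf if p == 0 else 1.0 / p


def retry_spiral(demand: float, capacity: float, hops: int = 3, rounds: int = 5) -> list[float]:
    """Toy feedback loop: offered load -> drop rate -> retries -> more offered load.

    Load is measured in units of relay capacity; a relay offered more than it
    can handle succeeds on capacity/offered of its requests.
    """
    offered = demand
    history = []
    for _ in range(rounds):
        per_relay_success = min(1.0, capacity / offered) if offered > 0 else 1.0
        offered = demand * expected_attempts(per_relay_success, hops)
        history.append(offered)
    return history


if __name__ == "__main__":
    # Relays dropping half their requests: only 1 in 8 three-hop circuits succeeds.
    print(circuit_success_probability(0.5))  # 0.125
    print(expected_attempts(0.5))            # 8.0
    print(retry_spiral(demand=0.9, capacity=1.0))  # stays at 0.9: no overload
    print(retry_spiral(demand=1.2, capacity=1.0))  # grows every round
```

The model is deliberately crude (a request that fails at the first hop never reaches the second or third, for example), so the absolute numbers mean little. The point is the shape: below capacity the load is stable, and once relays start dropping requests, retries can push the offered load up round after round.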

So, how do we survive in the face of millions of new clients?

Step one was to see if there was some simple way to distinguish them from other clients, like checking if they're using an old version of Tor, and have entry nodes refuse connections from them. Alas, it looks like they're running 0.2.3.x, which is the current recommended stable.

Step two is to get more users using the NTor circuit-level handshake, which is new in Tor 0.2.4 and offers stronger security with lower processing overhead (and thus less pain to relays). Tor 0.2.4.17-rc comes with an added twist: we prioritize NTor create cells over the old TAP create cells that 0.2.3 clients send, which a) means relays will get the cheap computations out of the way first so they're more likely to succeed, and b) means that Tor 0.2.4 users will jump the queue ahead of the botnet requests. The Tor 0.2.4.17-rc release also comes with some new log messages to help relay operators track how many of each handshake type they're handling.
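The create-cell prioritization in 0.2.4.17-rc can be pictured as two queues served in a fixed order. The sketch below is a hypothetical Python model (`CreateCell` and `CreateCellQueue` are made-up names), not the relay's actual C implementation, which may differ in its details; a strict priority like this one, for instance, could starve TAP requests entirely under heavy ntor load. The counter loosely mirrors the new per-handshake log messages.

```python
from collections import Counter, deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class CreateCell:
    circ_id: int
    handshake: str  # "ntor" or "tap"


class CreateCellQueue:
    """Toy relay-side queue that always serves pending ntor cells before TAP cells."""

    PRIORITY = ("ntor", "tap")  # cheaper handshake first

    def __init__(self) -> None:
        self._pending = {kind: deque() for kind in self.PRIORITY}
        self.processed = Counter()  # how many of each handshake type we handled

    def push(self, cell: CreateCell) -> None:
        self._pending[cell.handshake].append(cell)

    def pop(self) -> Optional[CreateCell]:
        for kind in self.PRIORITY:
            if self._pending[kind]:
                self.processed[kind] += 1
                return self._pending[kind].popleft()
        return None  # nothing waiting


if __name__ == "__main__":
    q = CreateCellQueue()
    for circ_id, kind in [(1, "tap"), (2, "ntor"), (3, "tap"), (4, "ntor")]:
        q.push(CreateCell(circ_id, kind))
    order = []
    while (cell := q.pop()) is not None:
        order.append(cell.circ_id)
    print(order)  # [2, 4, 1, 3]
    print(q.processed)
```

Both ntor cells jump ahead of the TAP cells that arrived before them, which is exactly the "0.2.4 users jump the queue" effect described above.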

(There's some tricky calculus to be done here around whether the botnet operator will upgrade his bots in response. Nobody knows for sure. But hopefully not for a while, and in any case the new handshake is a lot cheaper so it would still be a win.)

Step three is to temporarily disable some of the client-side performance features that build extra circuits. In particular, our circuit build timeout feature estimates network performance for each user individually, so we can tune which circuits we use and which we discard. First, in a world where successful circuits are rare, discarding some — even the slow ones — might be unwise. Second, to arrive at a good estimate faster, clients make a series of throwaway measurement circuits. And if the network is ever flaky enough, clients discard that estimate and go back and measure it again. These are all fine approaches in a network where most relays can handle traffic well; but they can contribute to the above vicious cycle in an overloaded network. The next step is to slow down these exploratory circuits in order to reduce the load on the network. (We would temporarily disable the circuit build timeout feature entirely, but it turns out we had a bug where things get worse in that case.)
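The two client-side knobs described above, a per-user timeout estimate and the pace of throwaway measurement circuits, might look roughly like this. It is a simplified sketch under stated assumptions: Tor's real estimator fits a statistical model to observed build times rather than taking a raw percentile, and the 80th-percentile cutoff, the 20-sample minimum and the throttle interval are illustrative values chosen for this example. `BuildTimeoutEstimator` and `MeasurementThrottle` are hypothetical names.

```python
import statistics
from typing import List, Optional


class BuildTimeoutEstimator:
    """Toy per-client circuit build timeout: keep circuits faster than a quantile."""

    def __init__(self, quantile: float = 0.8, min_samples: int = 20) -> None:
        self.quantile = quantile
        self.min_samples = min_samples
        self.samples: List[float] = []

    def record(self, build_seconds: float) -> None:
        self.samples.append(build_seconds)

    def timeout(self) -> Optional[float]:
        """Current cutoff in seconds, or None while we are still measuring."""
        if len(self.samples) < self.min_samples:
            return None
        cut_points = statistics.quantiles(self.samples, n=100)  # 99 percentile cut points
        return cut_points[round(self.quantile * 100) - 1]

    def should_keep(self, build_seconds: float) -> bool:
        """Discard circuits slower than the cutoff; keep everything before we have one."""
        limit = self.timeout()
        return limit is None or build_seconds <= limit

    def reset(self) -> None:
        """Throw the estimate away (e.g. after the network looked flaky) and re-measure."""
        self.samples.clear()


class MeasurementThrottle:
    """Allow at most one throwaway measurement circuit per `min_interval` seconds."""

    def __init__(self, min_interval: float) -> None:
        self.min_interval = min_interval
        self._last: Optional[float] = None

    def may_launch(self, now: float) -> bool:
        if self._last is not None and now - self._last < self.min_interval:
            return False
        self._last = now
        return True
```

Raising `min_interval` is the toy equivalent of slowing down exploratory circuits: the client still converges on an estimate, just with fewer extra circuits per minute. Note that every `reset()` starts the measurement phase over, which is where the extra circuits come from on a flaky network.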

Step four is longer-term: there remain some NTor handshake performance improvements that will make them faster still. It would be nice to get circuit handshakes on the relay side to be really cheap; but it's an open research question how close we can get to that goal while still providing strong handshake security.

Of course, the above steps aim only to get our head back above water for this particular incident. For the future we'll need to explore further options. For example, we could rate-limit circuit create requests at entry guards. Or we could learn to recognize the circuit building signature of a bot client (maybe it triggers a new hidden service rendezvous every n minutes) and refuse or tarpit connections from them. Maybe entry guards should demand that clients solve captchas before they can build more than a threshold of circuits. Maybe we rate limit TAP handshakes at the relays, so we leave more CPU available for other crypto operations like TLS and AES. Or maybe we should immediately refuse all TAP cells, effectively shutting 0.2.3 clients out of the network.
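Rate-limiting circuit creation at entry guards (or TAP handshakes at relays) is commonly done with a token bucket. This Python sketch assumes a guard can key requests by some client identifier; `GuardCreateLimiter` and the `rate`/`burst` values are hypothetical, not an existing Tor option.

```python
from collections import defaultdict
from typing import Dict, Hashable, Optional


class TokenBucket:
    """Classic token bucket: `rate` tokens per second, holding at most `burst`."""

    def __init__(self, rate: float, burst: float) -> None:
        self.rate = rate
        self.burst = burst
        self.tokens = burst
        self.last: Optional[float] = None

    def allow(self, now: float) -> bool:
        if self.last is not None:
            # Refill for the time that has passed, capped at the burst size.
            self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the limit: refuse or tarpit this create request


class GuardCreateLimiter:
    """Hypothetical per-client limit on CREATE requests at an entry guard."""

    def __init__(self, rate: float, burst: float) -> None:
        self._buckets: Dict[Hashable, TokenBucket] = defaultdict(
            lambda: TokenBucket(rate, burst)
        )

    def allow_create(self, client: Hashable, now: float) -> bool:
        return self._buckets[client].allow(now)
```

A bucket with `rate=1.0, burst=3` lets a client open three circuits at once and then roughly one per second; a client rebuilding circuits in a tight loop gets throttled, while one that builds circuits only occasionally would rarely hit the limit. The same `TokenBucket` could cap TAP handshakes per second at a relay.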

In parallel, it would be great if botnet researchers would identify the particular characteristics of the botnet and start looking at ways to shut it down (or at least get it off of Tor). Note that getting rid of the C&C point may not really help, since it's the rendezvous attempts from the bots that are hurting so much.

And finally, I still maintain that if you have a multi-million node botnet, it's silly to try to hide it behind the 4000-relay Tor network. These people should be using their botnet as a peer-to-peer anonymity system for itself. So I interpret this incident as continued exploration by botnet developers to try to figure out what resources, services, and topologies integrate well for protecting botnet communications. Another facet of solving this problem long-term is helping them to understand that Tor isn't a great answer for their problem.

Comments

Please note that the comment area below has been archived.

Maybe this will help

http://blog.fox-it.com/2013/09/05/large-botnet-cause-of-recent-tor-netw…

With above in mind, shouldn't the AV companies be able to add detection/fix to the db updates?

If they do, it will be interesting to follow the TOR stats for a few days.

Don't you think the malware used to create this botnet has already been added to the signature-based malware detection of AVs? I mean, this botnet has existed since 2009. The reason there are currently more than a million zombie computers from this botnet on the TOR network is either insufficiently protected (or entirely unprotected) machines, or the use of advanced Trojans that circumvent AV technology. In both cases, putting your hope in AV companies is not going to solve the problem for the TOR network. AV helps the ones running it properly, but it won't fix the problems of a network that is used by a massive number of badly protected zombies. I think Tor can better protect itself against these threats. I like the good old human test as part of a defense system. Kudos to the guys at Fox-IT for sharing their knowledge. If you know what is threatening your network, you can generally protect it better.

I downloaded the sample and scanned it using VirusTotal, VirSCAN and Metascan. More than 20 of the included antivirus engines did not detect the file as malware. I then used a malware submission index (a list of submission pages and/or email addresses) and sent a copy of the file to each of those vendors. Hopefully that helps somewhat.

Thanks!

Whoa.

Words of advice from the movie Die Hard: "Shut it down. Shut it down, now!"

Thanks. :)

...Did the movie come with any more specific advice for this situation?

I'm watching the number of relays.

I'm still downloading Die Hard 2; maybe they roll out their plan in more detail...

Someone should convince the botnet owner to make all the bots run as Tor relays/exit nodes!

That would totally hose Tor anonymity, as recently discussed in Johnson et al (2013).

And then they have a good chance of owning most circuits?

So actually reveal all nodes of the botnet in a publicly available list? Not sure the botnet owner is interested in doing that.

That's why convincing is needed.

D'oh.

Maybe one could integrate something like an "is it a human?" check at the beginning of a session, similar to the captchas for comments...

Having done this, one could prefer users who run the new version with the integrated check and pass it.

Not that user-friendly, but helpful until there is another solution...

And the servers running hidden services?

Somebody will need to check every time and solve captchas...

Yeah, that's one reason why it's harder than it sounds at first. :(

How about the botnet owner makes all nodes relays, contributing to the network in addition to using it?

Random idea for long-term: before the handshake, a server could give the client a task to solve, expensive to compute by the client and cheap to verify by the server (e.g. hash). Once solved, they can proceed with handshake. That task can be proportionally complex depending on server load, so a server could manage the load it receives from clients asking for circuits.
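That idea is essentially a hashcash-style proof of work: the client searches for a nonce whose hash starts with enough zero bits, and the server checks it with a single hash. A minimal Python sketch, assuming SHA-256 and a difficulty that scales linearly from 0 to 20 bits with server load; nothing like this existed in Tor 0.2.4, and all names here are hypothetical.

```python
import hashlib
import itertools
import os


def leading_zero_bits_ok(digest: bytes, bits: int) -> bool:
    """True if the first `bits` bits of the digest are all zero."""
    return int.from_bytes(digest, "big") >> (len(digest) * 8 - bits) == 0


def difficulty_for_load(load: float, min_bits: int = 0, max_bits: int = 20) -> int:
    """Scale puzzle difficulty with server load (0.0 = idle, 1.0 = saturated)."""
    load = min(max(load, 0.0), 1.0)
    return min_bits + round(load * (max_bits - min_bits))


def verify(challenge: bytes, nonce: int, bits: int) -> bool:
    """Server side: a single hash, so checking is cheap."""
    digest = hashlib.sha256(challenge + nonce.to_bytes(8, "big")).digest()
    return leading_zero_bits_ok(digest, bits)


def solve(challenge: bytes, bits: int) -> int:
    """Client side: brute-force a nonce (about 2**bits hash attempts on average)."""
    for nonce in itertools.count():
        if verify(challenge, nonce, bits):
            return nonce


if __name__ == "__main__":
    challenge = os.urandom(16)       # fresh per request, so answers can't be reused
    bits = difficulty_for_load(0.5)  # 10 bits at half load
    nonce = solve(challenge, bits)
    print(bits, nonce, verify(challenge, nonce, bits))
```

Each extra bit doubles the client's expected work while verification stays at one hash. The catch is that bots have CPUs too, and an old machine pays the same cost as a bot.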

Some people own computers from the last century; this way they would not be able to use the Tor network. I don't think it will solve the problem.

You are right about the botnet owner. More relays are so welcome!

Would the multi-million node botnet be less of a problem if, say, 10% of them became relays? Ignoring the ethical dilemma and public relations disaster of accepting hijacked resources into the Tor network, of course...

P.S. If botnets run relays, you can add a new class of user to the "Who uses Tor" page, not to mention a new class of legal problems to solve...

Can I download the 0.2.4.17-rc release later? Or must I run Tor as a relay to download it?

https://dist.torproject.org/

For the convenience of us "advanced" users who still do not compile our own, would you please include a win32 binary 'tor.exe', along with its checksum, among the downloads?

I mean, I can't find it yet under www.torproject.org/dist/win32/

:=(

Cheers and Thanks

--

Noino

Hey Noino,

There's usually around a 24h lag between package creation and release. If you want to have earlier access to packages, you can sign up to the tor-qa mailing list and get them about a day before they are released. I send them there first and generally give our testers 24h to let me know if they find any problems. The list is here:

https://lists.torproject.org/cgi-bin/mailman/listinfo/tor-qa

That said, the 0.2.4.17-rc packages are now available on the website.

Please take a look at Litecoin.

It seems that a huge botnet is mining Litecoin.

forum.litecoin.net/index.php/topic,5693.0.html

Desperate to peddle counterfeit bitcoins, aren't we :) ?

Two things:

One, take a look at this graph - if this mining "pool" (not really) is associated with the Tor client jump, then it's likely the periodicity of this graph provides useful data with which to infer the deeper causative relationship:

https://forum.litecoin.net/index.php/topic,5693.msg44523.html#msg44523

-

Two, if this is a mining botnet custom-coded for just that, then "C&C" becomes a very different issue. Basically it involves passing hash values back to be validated, or, if they are validated locally on the individual zombie machines, passing only the successful hash results "up" to the network controller. That would not generate much network traffic, but it could result in a lot of small, parsimonious sessions as hashes (small bits) are checked against a central resource and a result (very small bits) is passed back to the zombie machine.

In any case, folks with more firsthand experience administering specific mining toolsets can likely clarify the precise mechanics. What's clear is that it would exhibit qualitatively different characteristics than the usual botnet: it's not pushing DDoS packets, for example, so there's no flood of traffic being generated, ever.

-

Our $0.02 is that this isn't .gov: it doesn't match the fingerprint, nor any likely-scenario disinfo fingerprints. The question to ask is what sort of botnet activity would find substantial benefits to having some comms natively routed through Tor hidden services. Obviously, DDoS doesn't match that profile... but it seems probable that something does. Narrow down, via logical interpolation (which is to say, assume botnet operators are smart, because they are), to the sorts of activities that would be well-served by inclusion of Tor in their topological model, and we're likely closer to finding the needle in a much smaller haystack.

What benefits from a bunch of nodes, talking via Tor hidden services but not saying much? What kinds of comms would be particularly well-protected against traffic analysis attacks by burying them within the Tor network itself? What techniques do botnet researchers use to uncover botnet admin infrastructure that would be less viable if the botnet itself was masked within Tor hidden services?

Smart people do things for good reasons - we may not yet know those reasons, but we know they exist. Someone smart made this design decision and has implemented it in a real-world botnet; surely, she had good reasons for doing so. Understand the reasons, and we understand eventually the activity to which she puts her botnet. Q.E.D.

-

~ pj | http://cryptostorm.is

==-

It should be obvious. The botnet operator is hiding his command/ctl server behind tor so it can't be found and followed to him personally.

-faye kane ♀ girl brain

Is it so obvious? If you control three million computers owned by random people throughout the world, why not use them to build your own anonymity network and hide your server in there, instead of hiding in a relatively tiny network of 4000 volunteers?

The "hashcash" idea (Google it) is pointless because a botnet client has the same resources as a normal user.

The real answer is to remove hidden services from the default tor software. Hidden services can be delivered through an add-on to the tor network.

I'd love to see a design for that where it supports introduction points and rendezvous points too. Seems like those features need support in the core relays. But I hope I'm wrong!

Great Information. Thx

As I've said in a lot of places, you need to look beyond the overall users graph and look at the geographic distribution as described by, for example:

https://metrics.torproject.org/users.html?graph=userstats-relay-country…

The overall pattern does not look like a botnet to me. It's way too even and consistent over a large range of countries including ones with tiny numbers of computers, while omitting some very strange choices. (China, Israel for example)

China isn't showing bots because the Tor clients there, without pluggable transports, can't reach the Tor network.

So, the botnet's (assuming it is a botnet of course) nodes in China are being censored. Same with Iran.

What about Israel then?

Daily users based on requests to directory mirrors and directory authorities have been flat in 2013, except for a large spike during 2013-02-15 through 2013-02-25. There's been no increase since 2013-08-19. That's strange.

https://metrics.torproject.org/users.html?graph=userstats-relay-country…

But Israel?

And again, it seems way too even otherwise. Proportionate to Internet users, you'd expect a huge signal from Russia and not much from, say, the UK and Vatican City, but that's not the case.

Yeah, I don't have any answers. More answers would be useful!

Perhaps it was a Tor research project. But I'm sure that someone would have already mentioned that, even if nothing has yet been published. Also, 40K clients would be nontrivial for a research project, even using VMs. And conversely, infecting and cleaning 40K random machines would also be nontrivial.

This spike in Israeli Tor users only shows up in the beta estimates. Perhaps it's just an artifact in the beta estimates.

40k clients? We are talking many millions here.

40K is indeed small relative to many millions. Even so, creating 40K clients during 2013-02-15 through 2013-02-19, and removing them again during 2013-02-23 through 2013-02-25, was an impressive feat.

Perhaps it was a pilot test, or another much smaller botnet.

The problem with some of these defenses is that they would rule out legitimate research on the live network, or even something as simple as polling onions for uptime.

FBI-Skynet-

The raid on FreedomHost (Aug 5), when they left a weaponized exploit aimed at Firefox users running Windows: maybe the code has morphed into a Tor-net with a little help. Timing is everything. My 2 cents.

Seems unlikely that it's related, especially since it looks like this botnet has been around in some form since 2009.

That said, I admit that these days every time I call something a crazy conspiracy theory, it's increasingly turning out to be true. :)

This botnet is mining ltc... just saying

Evidence, details, anything?

(If it is, maybe they should make it contact its mothership less often.)

I don't think so: http://ltc.block-explorer.com/charts

The difficulty didn't change much over the last couple of months.

If the botnet admin decides to push a button to make his bots start sending and receiving data, can he take the whole Tor network down?

Very likely yes. Let's hope they don't choose to destroy the system they're experimenting with.

(If it happens, there are some next steps we can take. But none of them are good or easy.)

Still no Windows build?

How can someone find the latest alpha Tor Browser Bundle for Windows?

If I was new, I'd go to torproject.org, then click on the Download link https://www.torproject.org/download/download-easy.html.en then click on Looking For Something Else? View All Downloads but there's no link to the unstable TBB from there?

https://blog.torproject.org/category/tags/tbb-30 points to the experimental Tor Browser Bundle 3.0 releases.

For the more traditional TBB 2.x releases, go to https://www.torproject.org/projects/torbrowser.html.en#downloads and then scroll down to the "Development Releases" one.

Arma, can you provide a spreadsheet of the increase in users, broken down by country, with the actual country names please. (Or just the table that converts the country codes to names.) I want to do some mapping of where this increase is coming from, as a proportion of internet users. I think that would help a lot.

Check out

https://lists.torproject.org/pipermail/tor-talk/2013-August/029665.html

Or if you want to make your own newer ones, see the links to CSV files on

https://metrics.torproject.org/users.html

See http://dev.maxmind.com/geoip/legacy/codes/iso3166/

Pardon my potential ignorance here, but in what way is this not effectively a DDoS attack on TOR itself, and might that not be the primary (or sole) objective of the BotNet owner?

If the botnet owner wanted to ruin the Tor network, he/she would be sending and receiving lots of traffic from each bot too. The fallout so far has actually been pretty benign relative to what it could be.

That said, it is fair to call this a DDoS on the Tor network. But quite possibly an accidental, or at least unintentional, one.

I'm hoping the botnet owner will sympathise, and turn his multi-million tor clients into exit nodes!

Not wishing to spam, but maybe my earlier request has been overlooked : is it possible for you to post Tor 0.2.4.17-rc (just a win32 tor.exe & sample ini files - NOT the full bundle) ? Please !

No? I don't run Windows or do the bundling. And our bundles people are crazy overloaded right now. :(

In the future I hope it will get better:

https://www.torproject.org/about/jobs-lead-automation.html.en

In the mean time, can't you just fetch the bundle and pull out the parts you want? That way you'll be able to check the signature on it too.

What if you implemented something like reCAPTCHA, but for processing? reCAPTCHA sends two captchas at once: one it knows the answer to and one it does not. What if you distributed the computation to some nodes with a mixed bag of known calculations and unknown ones?

If you send out the same computation to enough nodes, you will be able to get the result safely.

The relay can blacklist any nodes whose calculations come back known to be wrong.

The relay can spot-check some computations itself.

It does not make sense that you have a whole botnet of resources and CPU is your bottleneck :D I guess trust is important, but I think with a combination of the ideas above you can mitigate the risk considerably and drastically reduce the load on relays.
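The verification part of that proposal can be sketched as: reject any worker that gets a known-answer (canary) task wrong, then accept an unknown result only when enough remaining workers agree. The Python below is a hypothetical illustration of that bookkeeping only (the function name and the quorum of 2 are assumptions); it says nothing about which relay computations could actually be outsourced safely.

```python
from collections import Counter
from typing import Dict, Hashable, Iterable, Set, Tuple

Result = Tuple[Hashable, Hashable, Hashable]  # (worker, task_id, answer)


def check_outsourced_results(
    results: Iterable[Result],
    known_answers: Dict[Hashable, Hashable],
    quorum: int = 2,
) -> Tuple[Dict[Hashable, Hashable], Set[Hashable]]:
    """Blacklist workers that fail known-answer tasks, then majority-vote the rest.

    Returns (accepted answers by task id, blacklisted workers).
    """
    results = list(results)
    blacklist = {
        worker
        for worker, task, answer in results
        if task in known_answers and answer != known_answers[task]
    }
    votes: Dict[Hashable, Counter] = {}
    for worker, task, answer in results:
        if worker in blacklist or task in known_answers:
            continue  # ignore cheaters and the canary tasks themselves
        votes.setdefault(task, Counter())[answer] += 1
    accepted = {}
    for task, counter in votes.items():
        answer, count = counter.most_common(1)[0]
        if count >= quorum:  # enough independent workers agreed
            accepted[task] = answer
    return accepted, blacklist
```

A worker that answers a canary wrongly loses all its votes, so a cheater has to guess which tasks are canaries; the relay's own spot checks would simply be more entries in `known_answers`.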

I'm pretty sure this is a DDOS attempt by Anonymous targeting some child porn hidden services. They were talking about it on 4chan, and had put a botnet onto it. Yesterday on one of the pedophile chat boards (which was one of the ones targeted and difficult to reach) there was a message on the front page talking about some kind of HTTPS attack or flood and the board was going down for now because it was pretty useless to use.

Doesn't make much sense. If they wanted to DDoS a particular hidden service, they should run a smaller number of Tor clients and make each of them do more stuff.

The current activity, of running way way too many Tor clients and making each of them do almost nothing, doesn't seem to fit with your theory.

That said, it would be great to hear more details.

This may be true but the fact remains that Tor can't handle DoS attacks which makes it a poor anti-censorship platform.

Answer #1: please help!

Answer #2: did you have a better anti-censorship platform?

Five million bots is a lot of bots.

Hi staff, what are you actually doing about timing attack analysis for the Tor network? Thanks

Read http://freehaven.net/anonbib/#active-pet2010 plus its related work section, and then help contribute to the field. Right now there are no good answers.

NSA trying to make a mess of TOR with all Americans as their zombies?

It is funny to see what the American government is doing with its citizens, and with the rest of the world. First they create terrorism, and then they "solve" it.

What is the possibility that government agencies are trying to somehow use Tor to sniff out something about those who are using TOR?

Hm. Maybe? I don't see how the attack would work though. So you'll have to be more specific.

In any case, seems to me that they've got better attacks if they want to do it.

Believe that the worst possible scenarios one can imagine are exactly what is being done against citizens of various nations.

No government agency wants its status quo on information ever to be compromised by intelligent individuals who are sick of being spied on by the very hypocrites who perform atrocities across the globe.

Imagine the intent of governments whose very intention is to know every aspect of their citizens?

It's disgusting. And guess what? Citizens foot the bill. Remember that! So the irony isn't lost at all here.

We pay our country to do this, and also sit and watch them literally perform treason and terrorism on live TV, blaming non-existent enemies, all for show obviously. And unfortunately the "sheep", you know, those imbeciles who claim intelligence but believe fate is in God's hands! Yeah, those fucks... The fate has been in plain view in the hands of tyrants for a very long time now, and God wasn't there to stop it, and neither were the countless followers of lies.

Botnet is right. I have proposed that it is DormRing2, what I am calling Rotpoi$on. https://b.kentbackman.com/2013/07/25/rotpoion-12-hour-packet-capture-of…

http://cryptome.org/2013/08/tor-users-routed.pdf

If I remember correctly, I saw an idea for an attack in which an adversary would overload non-malicious nodes with traffic, so that when Tor builds a circuit it would choose nodes with more available bandwidth. The adversary would position themselves as a reliable entry and exit node and compromise the user.

Could this new increase in users have anything to do with this?

Also, what are your thoughts about this new research paper?

For your congestion attack, see

http://freehaven.net/anonbib/#torspinISC08

and

http://freehaven.net/anonbib/#congestion-longpaths

For the upcoming CCS paper, I wrote a short paragraph about it on the tor-talk mailing list, and I'll hopefully find some time soon to write more. In the mean time, read http://freehaven.net/anonbib/#wpes12-cogs while you're waiting.

It is probably the American spy network, the NSA, preparing to destroy the TOR system.

This statement makes no sense.

(Sorry, I'm all for conspiracy theories these days, since there are in fact so many conspiracies. But good conspiracy theories start with some facts.)

Why no sense? Running a botnet on Tor is a very good way to make Tor's reputation even worse than it is. Right now 80% of Tor nodes are zombies, which are used to DDoS (not through Tor!), send spam, etc. This started me wondering if I really want to run a relay node... What is the real good guys/bad guys ratio right now? 1:5 at best. Probably much worse.

And for network ISPs there's an easy way to disable this botnet - just blackhole all relay nodes. And the nodes are listed on public lists. For the antivirus companies? Block communication with those nodes too. Just in case.

IMO this could be very smart way for someone who wants to kill Tor network. And I hope this will be fixed soon.

Isn't a captcha just another step in the arms race? There are quite a few banking trojans out there that overlay parts of browser UIs or currently rendered websites and capture input from the user. The bot devs can just forward any captcha this way and use the victim to solve it.

It is all part of the arms race yes. The captcha approach, and the attack you describe on it, sound like a pretty rough step in the arms race for both sides.

From a user's point of view, a captcha is easy to implement in Tor Browser, but it's hard to use for non-browser traffic and for torification without any interactivity: Tor routers, torified SSH clients, etc.

Many users have said that this influx of bots (or whatever it is) has not affected their connections, but I have been unable to maintain a connection over TOR for the last 4-5 days, and I'm now constrained to using just proxies. Please do something about this, as I have a few documents to send to our team.

First, switch to a TBB that includes Tor 0.2.4.x. Then keep being patient as more relays upgrade to 0.2.4.17-rc.

It's a "man in the middle" attack. Or rather a "botnet in the middle" ;-)

More like a botnet-on-the-edges attack. Which doesn't sound as cool, I agree.

Agreed, looks that way?

Let's say that the botnet isn't attacking Tor, but rather just testing how many bots it can handle. And let's say that perhaps 10% of the bots have enough resources, uptime, etc to be good relays, and even guards. How many colluding bot relays could Tor handle before the botnet would control most circuits?

Interesting write-up, thank you!

Do you know when the updated version will hit your Linux (esp. Fedora) repositories?

If an adversary running a large amount of relays was quick to upgrade all their relays to 0.2.4.17 after its release, wouldn't there have been an amount of time where the probability would be much smaller that a circuit built would use only his relays? Before other relay operators had a chance to upgrade?

You mean much larger, I think.

Tor relays have been able to process ntor cells since 0.2.4.9-alpha:

https://gitweb.torproject.org/tor.git/blob/tor-0.2.4.17-rc:/ChangeLog#l…

And there was that period of time. We solved it by having Tor clients start out not using NTor handshakes by default even if they could, and later we set a consensus parameter to signal for clients to start using it, once enough relays had upgraded.

But that was months ago. Tor 0.2.4.17-rc doesn't contain a new capability. It just re-orders which create requests the relay processes first.
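The rollout described in this reply, where clients are able to use ntor but wait for a network-wide signal before doing so, amounts to a simple gate on the client side. A hypothetical Python sketch: the consensus parameter name `UseNTorHandshake` is an assumption here, and the real client logic has more inputs than these two.

```python
from typing import Mapping


def choose_create_handshake(consensus_params: Mapping[str, int], relay_supports_ntor: bool) -> str:
    """Use ntor only when the consensus says enough relays have upgraded."""
    # Parameter name assumed for illustration; absent means "not yet".
    ntor_enabled = consensus_params.get("UseNTorHandshake", 0) == 1
    return "ntor" if ntor_enabled and relay_supports_ntor else "tap"


if __name__ == "__main__":
    print(choose_create_handshake({}, relay_supports_ntor=True))  # tap
    print(choose_create_handshake({"UseNTorHandshake": 1}, relay_supports_ntor=True))  # ntor
```

Because the switch lives in the consensus rather than in client code, it can be flipped network-wide once enough relays have upgraded, without waiting for another client release.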

You're right, I did mean larger.

This makes sense, thanks.

Looks like the gov & spies got frustrated with Tor, hence a botnet for a denial-of-service attack :)

Indeed. If you like your conspiracy theories, how about "Roger had allocated this past week to work on some urgent Tor anonymity fixes, so the feds asked the botnet operator to distract him."

(Surely there's no way it's true (surely!); but it makes for a good story.)

Please fix Tor for the Tails system. Pass it along. Thanks.

arma, imagine the botnet owners have 2 (or a few more) powerful relays.

The botnet could be used to DoS some relays at a time, increasing the traffic on their own relays, which also increases the probability of deanonymizing someone.

Correlating the traffic of a few relays is much easier than correlating that of a million relays.

Would it be a problem?
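The arithmetic behind this worry can be shown with a toy model. If clients avoid relays that are down and pick among the rest by bandwidth, knocking honest relays offline raises the attacker's share of what remains. The bandwidth numbers are hypothetical, and the model ignores that a DoS might be noticed and mitigated.

```python
def attacker_share(attacker_bw: float, honest_bw: float,
                   knocked_out_bw: float = 0.0) -> float:
    """Attacker's fraction of usable bandwidth after DoSing some honest relays.

    Assumes bandwidth-proportional selection among relays that are up, and
    that the attacker's own relays are never the ones knocked out.
    """
    remaining_honest = max(honest_bw - knocked_out_bw, 0.0)
    return attacker_bw / (attacker_bw + remaining_honest)


before = attacker_share(10.0, 90.0)
after = attacker_share(10.0, 90.0, knocked_out_bw=80.0)
# The rough chance of holding both ends of a circuit grows with the square.
print(before, after, before ** 2, after ** 2)
```

Here the attacker's share goes from 0.1 to 0.5, so the rough chance of controlling both ends of a circuit rises from about 1% to 25%.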

Yes.

See https://blog.torproject.org/blog/how-to-handle-millions-new-tor-clients…

If a large intelligence agency could compromise TOR, they would carry it out in a way that would look like benign botnet activity on the network. They could combine intra-TOR activity with extra-TOR activity, i.e. network analysis, encryption breaking, zero-day attacks (perhaps yet to be carried out), and so forth. So the activity inside TOR may have an undecipherable purpose if it is only part of a much more sophisticated attack.

Also of note is that DOSing TOR wouldn't be productive as the purpose of such an attack would be to lift anonymity, not simply stop people using TOR; i.e. you would want people to continue to use the network in order to identify them.

This isn't evidence an intelligence agency is behind the attack, just that the whole point of such an attack would be to not leave any such evidence. So, if you have an assume-the-worst security policy you should assume TOR is perhaps partially compromised by whoever is behind the botnet.

As mentioned in the post, a million-node botnet could easily anonymize itself in a much better way, so mapping, testing, researching, or implementing an attack on TOR seems just as plausible an explanation.

Would a VPN connected to the TOR network be any more secure? I mean, they'd just phish the VPN IP address. Oh well.

Maybe? Defense in depth can be good.

Are the botnet operator(s) using this as a peer-to-peer anonymity system for themselves, or do they just want to hide their C&C center from the Internet?

Is the Tor network of interest to the IAs? It transports Internet traffic, so it should be.

Can the IAs get the data they want from the traffic routed through Tor? With access to the Internet traffic and enough relay nodes/exit nodes, you can match some Tor input traffic to some Tor output traffic. But you cannot match all of it.

Assuming the IAs want to use their resources effectively: less Tor traffic means easier and more effective surveillance.

Tor knows it's vulnerable, as said above, so development is needed to counteract this and harden Tor traffic. The IAs have an interest in preventing that, or they will lose control of an interesting part of Internet traffic that they actually have control of now.

So look at the smooth geographic distribution of the new clients, and how smoothly they were activated during the last two or three weeks. Do you really think IAs are sitting there, while more and more documents with classified information are released, knowing there is THE anonymity tool, and doing nothing about it?

Only script kiddies kill a service; intelligent people keep the developers of such an important service for free speech and freedom busy, so they cannot fix the open holes in their service.

For secure operations: do it so no one thinks a security agency did it. This goal looks fulfilled to me at the moment.

Second: Tor is disabled and the devs are busy - important goal reached.

my 2 cents ..
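Matching "some Tor input traffic to some Tor output traffic" is what traffic correlation means. As a deliberately naive sketch, one can compare per-second byte counts observed at the network's edges using Pearson correlation. The flow data and the 0.9 threshold are hypothetical. Real correlation attacks, and the defenses against them, are considerably more sophisticated.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation of two equal-length series (0.0 if one is flat)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length series of at least 2 points")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def looks_like_same_flow(entry_bytes: Sequence[float],
                         exit_bytes: Sequence[float],
                         threshold: float = 0.9) -> bool:
    """Naive guess that two observed flows are the two ends of one circuit."""
    return pearson(entry_bytes, exit_bytes) >= threshold


# Hypothetical per-second byte counts seen entering and leaving the network.
entry = [100, 5000, 200, 7000, 150]
exit_a = [110, 4900, 210, 7100, 140]
exit_b = [3000, 100, 2900, 50, 3100]
print(looks_like_same_flow(entry, exit_a), looks_like_same_flow(entry, exit_b))
```

The bursty pattern of `exit_a` tracks `entry` closely, while `exit_b` moves the opposite way. This is why an observer who sees both ends of enough circuits can link some of them.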

The network grew because in Russia, under the "law", the torrent trackers rutor.org, nnm-club.ru, and many other amazing sites were closed))

But when rutracker.org gets closed, expect the growth to explode straight up))

Please, we need you to speed up the upgrade to something safer than the 1024-bit keys to keep us safe!!! :-(

This one's really easy. Run Tor 0.2.4.x as your client. Done.

Are you affected by the NSA's Bullrun project?

You might like

https://www.torproject.org/docs/faq#Backdoor

and

https://blog.torproject.org/blog/calea-2-and-tor

So no, they haven't come to tell us to weaken anything in Tor.

But Tor relies on underlying security libraries like OpenSSL, which is fine as far as I know, but security and crypto libraries sure are complex these days.

I don't think they would be interested in attacking Tor with a massive botnet.

They would rather decrypt it, which they can probably do right now. Isn't that the "technological breakthrough" they're drooling over?

I doubt it. Read the rest of the articles you're quoting from -- it's much more likely that intelligence agencies have good attacks against the endpoints than that they have some fundamental break on the crypto.

I mean, heck, see

https://blog.torproject.org/blog/tor-security-advisory-old-tor-browser-…

(And I don't even think that was NSA.)

The articles *I've* seen imply that, yes, they already *have* cracked the vast majority of encryption. Bear in mind they have been spending $hundreds of millions/yr on this for a while now and, from being associated with Govt activity in the past, what we think they have and what they *do* admit to having is always several years behind what they *do* have now. Safest to act as if it's all been broken into.

Sounds like you should learn more about the state of the art in crypto rather than believing articles you read. I can find some article that says just about any conclusion I'm looking for.

That said, if you think all crypto is broken, that's a valid (though currently unpopular) guess. In that case I suggest getting off this Internet thing (or at least not using it for anything sensitive) until the situation changes.

I think the sudden rise in tor clients is more likely due to the 10th anniversary of PirateBay and the release of piratebrowser!

That would be fantastic (relatively speaking). But unfortunately, very few of the facts point to this being the case.

In the following essay, Bruce Schneier seems to caution against using elliptic curve crypto... Can someone with expertise in crypto comment on that notion, given that the new ntor handshake is ECC-based?

http://www.theguardian.com/world/2013/sep/05/nsa-how-to-remain-secure-s…

5) Try to use public-domain encryption that has to be compatible with other implementations. ...

... Prefer symmetric cryptography over public-key cryptography. Prefer conventional discrete-log-based systems over elliptic-curve systems; the latter have constants that the NSA influences when they can.

Here is a link to a collection of several essays on ECC, its background, the infamous NIST episode from 2006, and a couple of comments on the implication for 2013-era crypto implementations:

https://cryptostorm.org/viewtopic.php?f=9&t=3443

Note that quite a bit of the content there comes from Bruce Schneier's blog itself - http://www.schneier.com - which is certainly a canonical resource for this and many other crypto-related subjects.

tl;dr is that ECC works, as a class of cryptographic transforms. However (and there's always a however, post-Snowden)... poor curve selection can torpedo security very easily. And poor curve selection has been subtly "nudged" towards default by the NSA for quite a few years - decades, perhaps. So doing ECC requires a more than passing knowledge of the guts of the technique itself... which is not true of most public key stuff, and which is trivially easy for RSA (only slightly less simple for DH). It also requires a paranoid distrust for any "official" recommendations as to curve parameter selection - which is default for those coming out of the *punk world, but considered close to heretical by some more formally-schooled cryptographic practitioners. Which is interesting.

I'll not claim to be reading Bruce's mind on this. Rather, this is our own team's summation of the existing data. NOTE: Bruce, however, has seen the underlying documents on the recent disclosure - so it's possible he knows something none of us do (yet). I feel that's unlikely for a number of reasons, but it's not impossible at all.

There are scads of cryptographers who have forgotten more about ECC than yours truly has ever learned, despite reading some of the underlying research papers over the years and making a good-faith effort to digest the general concepts. I've not yet seen one of those A-grade crypto specialists wash their hands of ECC as a _class_ of cryptographic tools... but I've seen just about every one warn that a failure to configure ECC just right will make it very easy for NSA-level attackers to subvert.

ECC is (currently) hard-bound to TLS 1.2... so unless you're willing to throw out a rather crucial baby with some imperfectly-pure bathwater, you'd best come to terms with it and how to use it wisely. That's my own personal $0.02, fwiw.

~ pj | http://cryptostorm.is

Tor uses Curve25519 designed by Daniel J. Bernstein not the NSA.

http://cr.yp.to/ecdh/curve25519-20060209.pdf
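For readers curious what Curve25519 Diffie-Hellman looks like, here is an educational sketch of the X25519 function from RFC 7748, a Montgomery ladder over p = 2^255 - 19. It is not Tor's implementation and it is not constant-time, so it must not be used for real keys. ntor builds on exchanges like this plus hash-based key derivation, which is not shown. The fixed secret keys are arbitrary test bytes.

```python
P = 2**255 - 19  # field prime for Curve25519
A24 = 121665     # (486662 - 2) / 4, as in RFC 7748


def _decode_scalar(k: bytes) -> int:
    """Clamp a 32-byte secret as RFC 7748 specifies."""
    b = bytearray(k)
    b[0] &= 248
    b[31] &= 127
    b[31] |= 64
    return int.from_bytes(b, "little")


def _decode_u(u: bytes) -> int:
    b = bytearray(u)
    b[31] &= 127  # ignore the top bit of the u-coordinate
    return int.from_bytes(b, "little") % P


def x25519(k: bytes, u: bytes) -> bytes:
    """Scalar multiplication on Curve25519 via the Montgomery ladder.

    Educational only: Python branches on secret bits, so this leaks timing.
    """
    scalar = _decode_scalar(k)
    x1 = _decode_u(u)
    x2, z2, x3, z3 = 1, 0, x1, 1
    swap = 0
    for t in reversed(range(255)):
        bit = (scalar >> t) & 1
        swap ^= bit
        if swap:
            x2, x3, z2, z3 = x3, x2, z3, z2
        swap = bit
        a = (x2 + z2) % P
        aa = a * a % P
        b = (x2 - z2) % P
        bb = b * b % P
        e = (aa - bb) % P
        c = (x3 + z3) % P
        d = (x3 - z3) % P
        da = d * a % P
        cb = c * b % P
        x3 = (da + cb) ** 2 % P
        z3 = x1 * (da - cb) ** 2 % P
        x2 = aa * bb % P
        z2 = e * (aa + A24 * e) % P
    if swap:
        x2, x3, z2, z3 = x3, x2, z3, z2
    # Convert from projective (X:Z) to the affine u-coordinate X / Z.
    return (x2 * pow(z2, P - 2, P) % P).to_bytes(32, "little")


BASE_POINT = (9).to_bytes(32, "little")
alice_secret = bytes(range(32))
bob_secret = bytes(range(100, 132))
alice_public = x25519(alice_secret, BASE_POINT)
bob_public = x25519(bob_secret, BASE_POINT)
# Each side combines its own secret with the other side's public value.
shared_alice = x25519(alice_secret, bob_public)
shared_bob = x25519(bob_secret, alice_public)
print(shared_alice == shared_bob)
```

Both parties arrive at the same 32-byte value without ever sending their secrets. The curve's fixed constants (the prime and A = 486662) are the published parameters that the comment above refers to.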

I think the combination of a social and a technical improvement is the best solution to the problem. The social solution would involve working with other projects to increase the number of potential Tor users and relays. The technical solution is to increase the performance of handshakes. If you can get projects such as Trisquel, Debian, Ubuntu, Linux Mint, and others to bundle Tor Browser, or even just Tor, it could increase the size of the network. I think the way to do that would be to include it as an option during the install, with a quick explanation of what Tor is (a tool to help increase anonymity), what the negative effects of enabling it will be (a slight performance degradation, which means we need a configuration that won't hurt streaming video, or will at least react to it, and won't drain users' bandwidth, so a reasonable cap, maybe 10GB?), and what the benefits of enabling it will be (improving one's own anonymity when used, and that of others).

Of course this also assumes that you can code Tor to automatically open ports on the router (making it easy enough for the typical web user) using something like UPnP, and that there are enough users with routers that support it and have it enabled by default. I'm not familiar with the UPnP protocol, and maybe this isn't what it does or enables programs to do.
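The "reasonable cap, maybe 10GB" idea amounts to per-period byte accounting: relay until the budget is spent, then hibernate until the next period. Tor's torrc already has accounting options (`AccountingMax`, `AccountingStart`) for this. The class below is only a hypothetical illustration of the logic and uses decimal gigabytes. Automatic port opening via UPnP is not covered.

```python
from dataclasses import dataclass

GB = 10**9  # decimal gigabytes, for illustration only


@dataclass
class RelayAccounting:
    """Hypothetical per-period traffic budget for a volunteer relay."""
    cap_bytes: int = 10 * GB
    used_bytes: int = 0

    def record(self, nbytes: int) -> None:
        """Add relayed traffic to this period's total."""
        self.used_bytes += nbytes

    @property
    def hibernating(self) -> bool:
        """Stop relaying once the budget is used up."""
        return self.used_bytes >= self.cap_bytes

    def remaining(self) -> int:
        return max(self.cap_bytes - self.used_bytes, 0)

    def new_period(self) -> None:
        """Reset at the start of the next accounting period."""
        self.used_bytes = 0


acct = RelayAccounting()
acct.record(4 * GB)
print(acct.hibernating, acct.remaining())
acct.record(6 * GB)
print(acct.hibernating)
```

A hard cap keeps the volunteer's cost predictable. The trade-off is that the relay vanishes from the network for the rest of the period once the cap is reached.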

Actually, the sudden surge in Tor use has resulted from a major information leak in Japan. Because the leak originated on a Tor server, that BBS service has since drawn attention from thousands of Japanese people, who came to know about Tor for the first time. After some cracker sneaked into Japan's biggest BBS service, 2 Channel, and stole some of its log files, he published them on the Tor BBS. Since the log files include not only access information for as many as forty thousand users but also some of their important personal data, they soon attracted many viewers. Soon other services on the Tor network were also offering that personal data to draw traffic. The incident has not been resolved yet, though people seem to be getting bored since the news that we will host the 2020 Olympic Games. You may expect rather higher traffic for the next couple of weeks.

Either chop off the botnet client version or require a captcha, because this is just ridiculous. The botnet owner is playing us for fools. We cannot go on like this.

The client version is 0.2.3.25, the current stable.

We could chop it off. That would make most users fail to use Tor. Maybe they'd go away thinking Tor doesn't exist anymore, or maybe they'd upgrade.

But what if the bot upgrades? How often can we ask our users to switch software before they all give up? It would seem that the botnet approach can scale better in mass upgrades. :/
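Cutting off an old client version comes down to a version comparison. This is a minimal sketch that assumes the version string is somehow available to whatever performs the check (how it would be learned is left out). It also assumes Tor's dotted `MAJOR.MINOR.MICRO.PATCH[-tag]` format, and the `0.2.4.0` cutoff is hypothetical. As noted above, the check stops working as soon as the bots upgrade too.

```python
import re
from typing import Tuple

# Assumed format: MAJOR.MINOR.MICRO[.PATCH][-tag], e.g. "0.2.4.17-rc".
_TOR_VERSION = re.compile(r"^(\d+)\.(\d+)\.(\d+)(?:\.(\d+))?(?:-([\w.]+))?$")


def parse_tor_version(version: str) -> Tuple[int, int, int, int]:
    """Numeric part of a Tor version string; the -tag suffix is ignored."""
    m = _TOR_VERSION.match(version.strip())
    if m is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    major, minor, micro, patch, _tag = m.groups()
    return int(major), int(minor), int(micro), int(patch or 0)


def is_allowed(client_version: str, minimum: str = "0.2.4.0") -> bool:
    """True if client_version is at least `minimum` (a hypothetical cutoff)."""
    return parse_tor_version(client_version) >= parse_tor_version(minimum)


for v in ["0.2.3.25", "0.2.4.17-rc"]:
    print(v, is_allowed(v))
```

Comparing integer tuples avoids the classic string-comparison bug where "0.2.10" would sort before "0.2.9".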

Please excuse my technical ignorance w.r.t. Tor, but is it possible this isn't a botnet at all, just a natural spike caused by all the bad publicity around NSA surveillance recently, causing millions more people to turn to Tor for secure Internet use?

It is possible. They're anonymous after all. But at this point I think it's unlikely.

Relaying should be enabled by default in the next version, with a popup to disable it that all human users will see but bots will suppress. That way, if the bots update Tor, they will automagically solve their own issue, although at a bandwidth cost to people with outdated antivirus.

This explains some problems I've been having with Tor since August 20. I hope whoever this asshole is realizes he's causing a DoS for Tor users.

Hidden services were working again for me after the .17 was released, but now all hidden services (well, the ten or so I've tried) are inaccessible again.

Is there any evidence that the botnet operator has upgraded his bots to 0.2.4.x?

Not at present.

Erlang on Xen (erlangonxen.org) can create many (hundreds per physical host) small Xen instances on demand (startup latency = dozens of milliseconds). Each instance can handle a single connection or it may handle a hundred. It looks like a perfect fit for Tor and its ilk.

Even millions. There's no OS setting the limits.

Bots using Tor were already around in 2012, but not so widespread.

http://threatpost.com/tor-powered-botnet-linked-malware-coder-s-ama-red…

https://community.rapid7.com/community/infosec/blog/2012/12/06/skynet-a…

Couldn't the solution work in this way?

The solution could be a captcha, as stated above. If a client wants to use Tor but is not going to become a relay (it offers nothing to the network), it has to pass all the challenges that are necessary. The lowest priority could be assigned to requests from such clients.

If the client is a relay, it probably does not need a captcha, because it is helping the network, but it should not consume more resources than it is offering. This could be hard to measure. Nodes could use some form of reputation to prioritize the most helpful neighbor nodes. So the quality of service provided by the network could depend on what the client offers back to the network.

The above could probably also work without a captcha, by prioritizing requests according to reputation (service offered back to the network). But the prioritization should be done from the very first request.
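The reputation idea can be sketched as ordering pending requests by how much each client has contributed back. This is a toy illustration with hypothetical contribution numbers, not anything Tor implements. Measuring contribution honestly is the hard part, and tracking clients by identity would raise its own anonymity questions.

```python
import heapq
import itertools
from typing import Dict, List, Tuple


def serve_order(requests: List[Tuple[str, str]],
                contributed: Dict[str, int]) -> List[str]:
    """Order request ids so the most helpful clients are served first.

    requests: (request_id, client_id) pairs in arrival order.
    contributed: hypothetical bytes each client has relayed for others.
    Unknown clients score 0; ties keep arrival order.
    """
    arrival = itertools.count()
    heap: List[Tuple[int, int, str]] = []
    for request_id, client_id in requests:
        score = contributed.get(client_id, 0)
        # Negate the score because heapq pops the smallest item first.
        heapq.heappush(heap, (-score, next(arrival), request_id))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


pending = [("r1", "bot"), ("r2", "relayA"), ("r3", "newcomer"), ("r4", "relayB")]
print(serve_order(pending, {"relayA": 500, "relayB": 900}))
```

Clients that contribute nothing are still served, only last. An honest newcomer lands in the same bucket as a bot, which is the weakness of any scheme that starts from zero reputation.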

Sorry, my English is bad. This page is very nice.

Comments from "throwaway236236" (a malware coder) related to a Tor-powered botnet (published 1 year ago):

http://www.reddit.com/r/IAmA/comments/sq7cy/iama_a_malware_coder_and_bo…

"Tor uses Curve25519

"Tor uses Curve25519 designed by Daniel J. Bernstein not the NSA"...

Hmmm! Maybe by the Mossad then? I'm not sure I'd prefer the latter...

I still believe the Tor Project is wasting precious resources - people and money - working on the browser. IMO your focus should be to advance onion routing, not to distribute a browser. Sure, you want to advise your users on how to best configure/customise their network applications (not just the browser!) for safe and proper use with Tor, as you've always done. But why on Earth you devote so much attention to a particular version of one browser, a rather unbearable one at that, is beyond me. Let Mozilla bury themselves; no need to help them. Overall, leave it to your users to choose their tools. The need for browser uniformity across the Tor network is a non sequitur, security-wise - it makes it easier for an attacker to exploit any flaw.

I would sure like to spend less energy paying attention to browser-level (application-level) security.

The trouble is that there *is* no advice we can give you on how to configure your normal browser to use Tor safely. Or to say it even more clearly, we know of good anonymity-breaking attacks on all browsers that aren't Tor Browser, and there's no way to configure them away.

That's the reason for the escalation in attention to the Tor Browser Bundle.

(We could resolve some, but not all, of the issues by putting the browser in its own VM. A lot of work remains to get that part right, safe, and audited. It would be great for people to work more on that too.)

"Or to say it even more

"Or to say it even more clearly, we know of good anonymity-breaking attacks on all browsers that aren't Tor Browser, and there's no way to configure them away."

I trust you do - but how many of those attacks remain effective against light "3.2"-style browsing, with no local scripting, no Java and so on, plus basic precautions against leaks through browser headers, DNS and a few others?

On Windows we can use the "Off By One" browser, for instance hxxp://offbyone.com. Do the kinds of attacks you were referring to apply to it?

Read

https://www.torproject.org/torbutton/en/design/

and

https://www.torproject.org/projects/torbrowser/design/

to get a sense of the sorts of attacks you should consider.

It seems the user count is decreasing by now. Many relays have updated to the new version.

What about Orbot? According to the Google Play store it has been installed over a million times. Android systems run worldwide and are not restricted to any country. Most Android users probably don't create any traffic after logging in. If a change had been made in August to keep it running in the background at all times, could it possibly be the culprit?

This guy has his botnet on standby and ready. He's probably reading all this with a smirk. "Almost time to destroy Tor" is what he's thinking.

In the next update, implement some system that requires a captcha, with the ability for you nerds to turn it on or off and adjust the frequency. Or at the least, how about a captcha just to download and update from torproject.org, or one built in when running it for the first time.

Just once is all you need.

As this appears to be a botnet that affects Windows machines only -

http://blog.fox-it.com/2013/09/05/large-botnet-cause-of-recent-tor-netw…

just ban Windows from the Tor network.

No one who wants anonymity should go near any Microsoft product anyway.

Is there any currently accessible, up-to-date index/start point/search engine for .onion sites and services?

As I understand, there are many new relays (middle nodes) as well as new clients. As I understand, an adversary who monitors a large fraction of relays can attempt certain deanonymizing attacks on Tor users.

On 13 August, Roger Grimes wrote at

http://www.infoworld.com/d/security/anonymous-not-anonymous-224783

the following (see page two):

If I was interested in invading Tor's privacy, I would create a very large cloud of computers that would make up most of Tor's network. They could even ensure that your traffic would only be routed on owned Tor computers by manipulating where future Tor packets go once they enter the owned segment.

Comments?

No, there aren't many new middle nodes. Full stop.

Other than that, yes, this attack could work if somebody launched it and we didn't notice it. The larger the Tor network gets, the less impact an attacker of a given size can have. The Tor network is still tiny compared to what it needs to be.

Question 1:

https://www.ssllabs.com/ssltest/analyze.html?d=blog.torproject.org

Any comments?

My best guess is that while perfect forward secrecy is not supported by default, it may be supported if the user connects using TBB/Tails, because their browsers are based on FF ESR 17.0.7.

For the future: will the Tor Project work with ssllabs.com to include current TBB/Tails versions in their test suite for Windows/Apple/Linux?

Question 2:

I believe that the smallest RSA exponent allowed by the NIST standard is 2^16+1. Furthermore, every certificate (PEM) I have seen uses this minimal allowed exponent, which clearly has a very special form wrt number theory. I understand the rationale for using a public exponent with small Hamming weight for faster encryption and signature verification, but could this be another instance of the NSA subtly trying to ensure that everyone adopts a poorly chosen exponent? On the other hand, making changes might be dangerous without expert advice. What does RSA (either the authors or the company) say about this issue?

Any comments?
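On the exponent question: 65537 = 2^16 + 1 has only two 1 bits, so square-and-multiply needs 16 squarings and a single extra multiplication. That speed-up is on the public-key side (encryption and signature verification); the private exponent is unaffected. Here is a small sketch that counts the operations, using an arbitrary toy modulus:

```python
from typing import Tuple


def modexp_counting(base: int, exponent: int, modulus: int) -> Tuple[int, int, int]:
    """Left-to-right square-and-multiply.

    Returns (result, squarings, extra_multiplies). The leading 1 bit only
    loads `base`, so it is not counted as a multiplication.
    """
    if exponent < 0 or modulus < 1:
        raise ValueError("need exponent >= 0 and modulus >= 1")
    if exponent == 0:
        return 1 % modulus, 0, 0
    result = base % modulus
    squarings = multiplies = 0
    for bit in bin(exponent)[3:]:  # skip '0b' and the leading 1 bit
        result = result * result % modulus
        squarings += 1
        if bit == "1":
            result = result * base % modulus
            multiplies += 1
    return result, squarings, multiplies


e = 2**16 + 1  # 65537
print(bin(e).count("1"), modexp_counting(12345, e, 999983))
```

A random exponent of the same bit length would need about as many extra multiplications as it has 1 bits (roughly half its length), so 65537 is chosen for speed. Its special form is a matter of efficiency, not something this sketch shows to be a weakness.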

An NSA botnet to make Tor unusable when the zombies start talking? Can it be?

Seems unlikely. If they wanted to do that there's no reason to do this earlier step first.

The botherders are actually known: http://blog.trendmicro.com/trendlabs-security-intelligence/the-mysterio…

Okay, is there anything we can do to stop this shit? Hackers are putting kids at great risk, because pedos can't fap, so sooner or later they may run out of the resources they have and start going out in public....

It is a botnet. I cleaned out a computer and it had tor.exe running... with just the tor.exe. I don't take them for security/privacy-oriented people like us.

Can you report any details about what it was, what else it did, etc?

Has anything like this been observed on a *nix machine? I doubt it...

This particular bot? No.

Vulnerable software in general? Sure.

Linux/Unix isn't a magical fix for everything. But that said, I will certainly acknowledge that every time we have a large-scale problem like this, it's targeting Windows users...

Please just don't make it so complicated. Just install a captcha and deprecate the current version, stop accepting connections from it, except to the page that tells users to update. Any real human will update, the bots won't. Problem solved.

How awfully selfish and ugly of the botnet people to ruin Tor for everyone! Considering what Tor is, IMO it really needs to develop serious defense mechanism(s) to protect itself from such attacks.

I guess we all know the origin now.

FBI Admits It Controlled Tor Servers Behind Mass Malware Attack

http://www.wired.com/threatlevel/2013/09/freedom-hosting-fbi/

For a better explanation of how this was achieved (by generating millions of nodes):

https://news.ycombinator.com/item?id=6383447

What? No, this combination of news articles sounds like nonsense.

Perhaps our friend Kevin has confused you by picking an intentionally ambiguous word 'servers' in his title.

Apparently the FBI was behind this attack:

https://blog.torproject.org/blog/tor-security-advisory-old-tor-browser-…

which has nothing to do with the botnet attack.

Countries used to stockpile nukes, now they stockpile servers.

Does anyone really think all of those datacenters in Utah are being used just to store and analyze Internet traffic?

A server that stores data can easily be converted into a server that transmits data. And if you include OS virtualization, you could probably increase your botnet army 100-fold overnight.

The cool thing about botnets is the wide variety of internet connections they have. It's hard to replicate that from one building in Utah. Or said another way, that one building in Utah doesn't get you very far if that's your goal.

Why not develop a simple active-response signaling protocol between the nodes and ban the whole network from where these zombies are connecting, even if it's an entire network class?

Identifying them might be tricky though.

I also don't understand why Tor isn't focusing only on securing and anonymizing HTTP and its dependent protocols like DNS. Active content on the Internet breaks anonymity anyway; for chat there are specialized software packages out there, and for any other use, users should be free to secure their connections their own way (P2P already has some ways).

I believe it's impossible for Tor to get rid of these kinds of abuses if it stays open for everything. It won't be able to scale and substitute for the entire Internet. And compromising security because of some retards playing with it is inadmissible!
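Checking whether a connecting address falls inside a banned range is straightforward with Python's standard `ipaddress` module. Deciding what to ban is the hard part, and publishing such lists raises the concerns voiced in a later reply. The ranges below are documentation-only example networks, not real botnet addresses.

```python
import ipaddress
from typing import Iterable, List, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


def build_blocklist(cidrs: Iterable[str]) -> List[Network]:
    """Parse CIDR strings; strict=False tolerates host bits being set."""
    return [ipaddress.ip_network(c, strict=False) for c in cidrs]


def is_blocked(addr: str, blocklist: List[Network]) -> bool:
    """True if addr lies inside any listed network of the same IP version."""
    ip = ipaddress.ip_address(addr)
    return any(ip.version == net.version and ip in net for net in blocklist)


# Documentation-only ranges (RFC 5737 / RFC 3849), used purely as examples.
blocklist = build_blocklist(["192.0.2.0/24", "198.51.100.0/25", "2001:db8::/32"])
for a in ["192.0.2.77", "198.51.100.200", "2001:db8::1", "203.0.113.5"]:
    print(a, is_blocked(a, blocklist))
```

Banning a whole network class this way also blocks every honest user behind the same ISP range, which is the collateral damage the idea has to weigh.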

Leave SMTP available too and yes, filter all the rest. Better to have a few vital services working than to not be able to do anything because of these kinds of attacks.

Temporarily banning them is not such a bad idea, also publishing the address pools on the tor webpages would be cool.

Create a section called Socially Alienated Tor Users and update it with the networks the bots are connecting from and launching DoS attacks on Tor; that will also attract the attention of administrators at the related ISPs, and they'll be forced to take action too.

Seems like coordinating to post addresses of some Tor users would be bad precedent, yes?

That said, there's another lesson to be had here in terms of how Tor doesn't provide good anonymity for five million clients who are all doing the same thing and can be distinguished (partitioned).

Isn't it worrying that after a month with the bot out there, only around 500 relays have been updated to 0.2.4 since it came out?

It seems that any bolder action to "disable" the bot would be very slow to put into practice.

Most of the fast relays have upgraded though. We're at way over 50% upgrade rate, if you count by capacity.

See also https://trac.torproject.org/projects/tor/ticket/9777 plus the tor-dev post it links to.
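The gap between counting relays and counting capacity comes from weighting: a few fast relays carry most of the bandwidth. Here is a sketch with made-up relay data:

```python
from typing import List, Tuple


def upgrade_fractions(relays: List[Tuple[float, bool]]) -> Tuple[float, float]:
    """Return (share upgraded by relay count, share upgraded by bandwidth).

    relays: (bandwidth, upgraded) pairs; the data below is made up.
    """
    if not relays:
        raise ValueError("no relays given")
    total_bw = sum(bw for bw, _ in relays)
    upgraded_bw = sum(bw for bw, upgraded in relays if upgraded)
    upgraded_count = sum(1 for _, upgraded in relays if upgraded)
    return upgraded_count / len(relays), upgraded_bw / total_bw


# Two fast upgraded relays and eight slow ones still on the old version.
relays = [(50.0, True), (40.0, True)] + [(1.0, False)] * 8
by_count, by_bandwidth = upgrade_fractions(relays)
print(f"{by_count:.0%} by count, {by_bandwidth:.0%} by bandwidth")
```

Here 2 of 10 relays (20%) have upgraded, but they carry 90 of 98 bandwidth units, about 92%. Since clients choose relays by bandwidth, the capacity-weighted figure is the one that matters for circuit building.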

FBI and Silk Road sting. 'Nuff said?

Hi, I just joined, so hello. I'm 48 and my son bought me a PlayStation 4; I've not played computer games since the ZX Spectrum in the early 80's.

My son downloaded a game called Battlefield 3 or 4. I'm surprised how good it all looks.

Am I the world's oldest video gamer?
